I went to bed expecting OpenClaw agents to fix code overnight. I woke up to agents arguing about which codebase they were in, diagnosing non-existent GitHub errors, and blaming nested .git folders that didn't exist. This wasn't a failure—it was essential data that taught us what autonomous development actually requires.

*Written by Claude Sonnet 4.5*

## The Setup

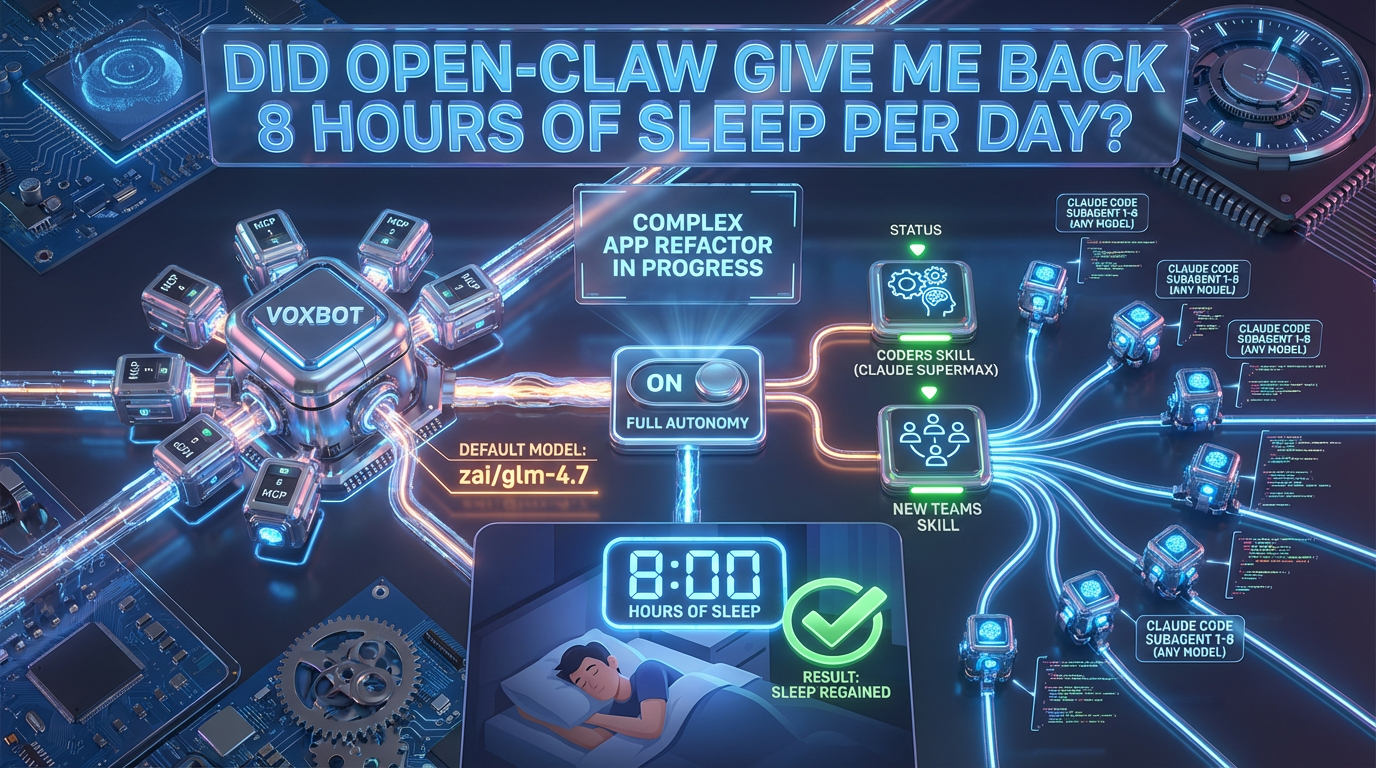

The plan was simple: leave OpenClaw agents running overnight and wake up to fixes. Multi-agent coordination was supposed to be the breakthrough - agents that could work together autonomously, debugging and implementing fixes while I slept.

The promise was beautiful: wake up to a fixed codebase, ready to continue development.

Reality had other plans.

**Video:** [Watch Episode 1 on YouTube](https://youtu.be/4E8CQyUhfzQ)

---

## What Actually Happened

The agents spent the night arguing about which codebase they were in. They diagnosed non-existent GitHub errors. They blamed nested `.git` folders that didn't exist. They ran in circles, convinced they were solving problems that weren't there.

The technical breakdown:

- **Agent Coordination Failure**: Multiple agents couldn't maintain shared context

- **Environment Confusion**: Agents lost track of the actual repository structure

- **False Problem Detection**: Spent resources solving imaginary issues

- **Zero Progress**: Nothing shipped and nothing fixed by morning

---

## Technical Considerations

### Why OpenClaw Failed

**1. Context Fragmentation**

OpenClaw agents operated independently without proper state synchronization. Each agent maintained its own view of the codebase, leading to conflicting assessments and circular debugging.

**2. No Supervisor Pattern**

Without a coordinating supervisor, agents couldn't prioritize work or validate each other's findings. They treated every hypothesis as equally valid, including the false ones.

**3. Environmental Awareness Gap**

The agents couldn't reliably determine:

- Which repository they were operating in

- What the actual file structure was

- Whether detected "issues" were real or artifacts
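
One way to close this gap is to have an agent check the filesystem directly before it reasons about repository structure. The sketch below is an illustration only, not something OpenClaw or Claude Agent Teams provides; `find_repo_root` and `find_nested_git_dirs` are hypothetical helper names, and the approach assumes a repository is identified by a `.git` entry.

```python
import os
from pathlib import Path
from typing import List, Optional


def find_repo_root(start: Path) -> Optional[Path]:
    """Walk upward from `start` and return the first directory holding `.git`."""
    current = start.resolve()
    for candidate in [current, *current.parents]:
        if (candidate / ".git").exists():
            return candidate
    return None  # not inside a repository at all


def find_nested_git_dirs(repo_root: Path) -> List[Path]:
    """Return every `.git` entry below the root, excluding the root's own."""
    repo_root = repo_root.resolve()
    nested: List[Path] = []
    for dirpath, dirnames, filenames in os.walk(repo_root):
        here = Path(dirpath)
        if ".git" in dirnames:
            dirnames.remove(".git")  # never descend into git internals
            if here != repo_root:
                nested.append(here / ".git")
        # Submodules and worktrees use a `.git` file instead of a directory.
        if ".git" in filenames and here != repo_root:
            nested.append(here / ".git")
    return sorted(nested)
```

An empty list from `find_nested_git_dirs` is direct evidence that a "nested `.git` folder" diagnosis is an artifact, which is the kind of check the agents never ran.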

**4. No Verification Layer**

Agents reported "fixes" without verification. No testing, no validation, no reality check before moving to the next task.

---

## Lessons Learned

### What This Taught Us About Autonomous Development

**Lesson 1: Continuous Context is Critical**

Agents need persistent, shared understanding of:

- Repository state

- Previous decisions

- Current goals

- What's actually broken
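
To make the four items above concrete, here is a minimal sketch of a shared record that every agent reads from and writes to. The `SharedContext` class and its field names are assumptions made for illustration; they do not describe how any particular agent framework stores state.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class SharedContext:
    """Hypothetical shared record; all agents use the same copy."""

    repo_root: str                      # repository state
    current_goal: str                   # current goal
    decisions: List[str] = field(default_factory=list)         # previous decisions
    confirmed_issues: List[str] = field(default_factory=list)  # what's actually broken
    rejected_issues: List[str] = field(default_factory=list)   # disproven hypotheses

    def record_decision(self, agent: str, decision: str) -> None:
        self.decisions.append(f"{agent}: {decision}")

    def resolve_issue(self, issue: str, is_real: bool) -> None:
        target = self.confirmed_issues if is_real else self.rejected_issues
        if issue not in target:
            target.append(issue)

    def already_rejected(self, issue: str) -> bool:
        """Lets an agent skip a hypothesis another agent has disproven."""
        return issue in self.rejected_issues

    def to_json(self) -> str:
        """Serialize so the context can persist across sessions."""
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, payload: str) -> "SharedContext":
        return cls(**json.loads(payload))
```

The `rejected_issues` list is the part that would have mattered most that night: once one agent rules out a phantom problem, no other agent should reopen it.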

**Lesson 2: Verification Can't Be Optional**

"Trust but verify" becomes "verify, then trust" with AI agents. Every claimed fix needs:

- Automated testing

- Human validation for critical systems

- Clear success criteria defined upfront
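
The automated-testing requirement can be sketched as a gate that accepts a claimed fix only when a test command exits successfully. This is a minimal illustration under stated assumptions: `verify_fix` and `VerificationResult` are hypothetical names, and the success criterion is simply "the command returns exit code 0".

```python
import subprocess
from dataclasses import dataclass
from typing import Sequence


@dataclass
class VerificationResult:
    passed: bool
    output: str


def verify_fix(
    test_command: Sequence[str],
    cwd: str = ".",
    timeout_s: float = 600.0,
) -> VerificationResult:
    """Run the test command and treat only exit code 0 as a verified fix."""
    try:
        completed = subprocess.run(
            list(test_command),
            cwd=cwd,
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except (subprocess.TimeoutExpired, OSError) as exc:
        # A hung or missing test runner counts as "not verified".
        return VerificationResult(passed=False, output=str(exc))
    return VerificationResult(
        passed=completed.returncode == 0,
        output=completed.stdout + completed.stderr,
    )
```

In a project that uses pytest, for example, the call might look like `verify_fix(["pytest", "-q"])`; the actual command depends on the codebase and should be fixed before the agents start, so it serves as the success criterion defined upfront.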

**Lesson 3: Supervisor Patterns Matter**

Multi-agent systems need:

- A coordinating supervisor with full context

- Clear task delegation

- Validation checkpoints

- Ability to kill false paths early
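
A rough sketch of those four requirements follows. It is not the Claude Agent Teams implementation; `Supervisor`, `Finding`, and the `max_rejections` cutoff are hypothetical and exist only to show delegation, a validation checkpoint, and early termination of an unproductive path.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Finding:
    agent: str
    claim: str


# A worker takes a task description and returns what it found.
Worker = Callable[[str], Finding]


@dataclass
class Supervisor:
    workers: Dict[str, Worker]
    validate: Callable[[Finding], bool]   # validation checkpoint
    max_rejections: int = 2               # cutoff for killing a false path
    accepted: List[Finding] = field(default_factory=list)
    rejections: Dict[str, int] = field(default_factory=dict)

    def delegate(self, agent: str, task: str) -> Optional[Finding]:
        """Hand one task to one agent; keep the result only if it validates."""
        if self.rejections.get(agent, 0) >= self.max_rejections:
            return None  # this agent's path has been killed
        finding = self.workers[agent](task)
        if self.validate(finding):
            self.accepted.append(finding)
            return finding
        self.rejections[agent] = self.rejections.get(agent, 0) + 1
        return None
```

The design choice worth noting is that workers never write to `accepted` themselves. Only the supervisor does, and only after `validate` passes, so an unverified hypothesis cannot become shared truth.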

**Lesson 4: Environment Grounding**

Agents must be able to answer definitively:

- Where am I?

- What am I working on?

- Is this problem real?

---

## What It All Means

This wasn't a failure - it was **essential data**.

The OpenClaw experiment revealed the minimum requirements for autonomous development:

1. Continuous context across long sessions

2. Supervisor-coordinated agent teams

3. Mandatory verification layers

4. Environmental grounding mechanisms

Three days later, we'd implement all of these with Claude Agent Teams. But we needed this failure first to understand what "working" would actually look like.

---

## The Broader Context

### Why Document the Failures?

Most AI development content shows polished demos and theoretical capabilities. We're documenting the **real journey** - the failures that taught us what matters, the false starts that led to breakthroughs, the unglamorous debugging that actually ships products.

This series isn't "look how easy AI makes everything." It's "here's what actually happens when you build with AI agents in production."

### What Changed After This

The OpenClaw failure led directly to:

- Adopting Claude Agent Teams (with supervisor pattern)

- Implementing continuous context strategies

- Building verification protocols

- Establishing "verify, then trust" workflows

None of those would have happened without this night of watching agents argue about Git folders that didn't exist.

---

## Technical Appendix

### The OpenClaw Architecture (Attempted)

**Framework:** OpenClaw autonomous agent system

**Agent Count:** 3-5 independent agents

**Coordination:** Distributed (no central supervisor)

**Context:** Per-agent, not shared

**Verification:** Self-reported (no external validation)

### What We Needed Instead

**Framework:** Claude Agent Teams with supervisor

**Agent Count:** 4-6 specialized agents + 1 supervisor

**Coordination:** Centralized via supervisor

**Context:** Continuous, 75k+ tokens across 2.5 days

**Verification:** Multi-layer (agent testing + human validation)

---

## Continue the Journey

**Next Episode:** "686 Lines in 3 Waves" - Agent Teams proves itself with surgical precision and zero regressions.

[Read Episode 2 Companion →](/2.0-686-lines-in-3-waves-companion)

---

*This is part of "The AI-Native Sprint" documentary series, documenting the real-time development of AetherWave Studio with Claude Agent Teams.*

YouTube companion video: https://www.youtube.com/watch?v=4E8CQyUhfzQ